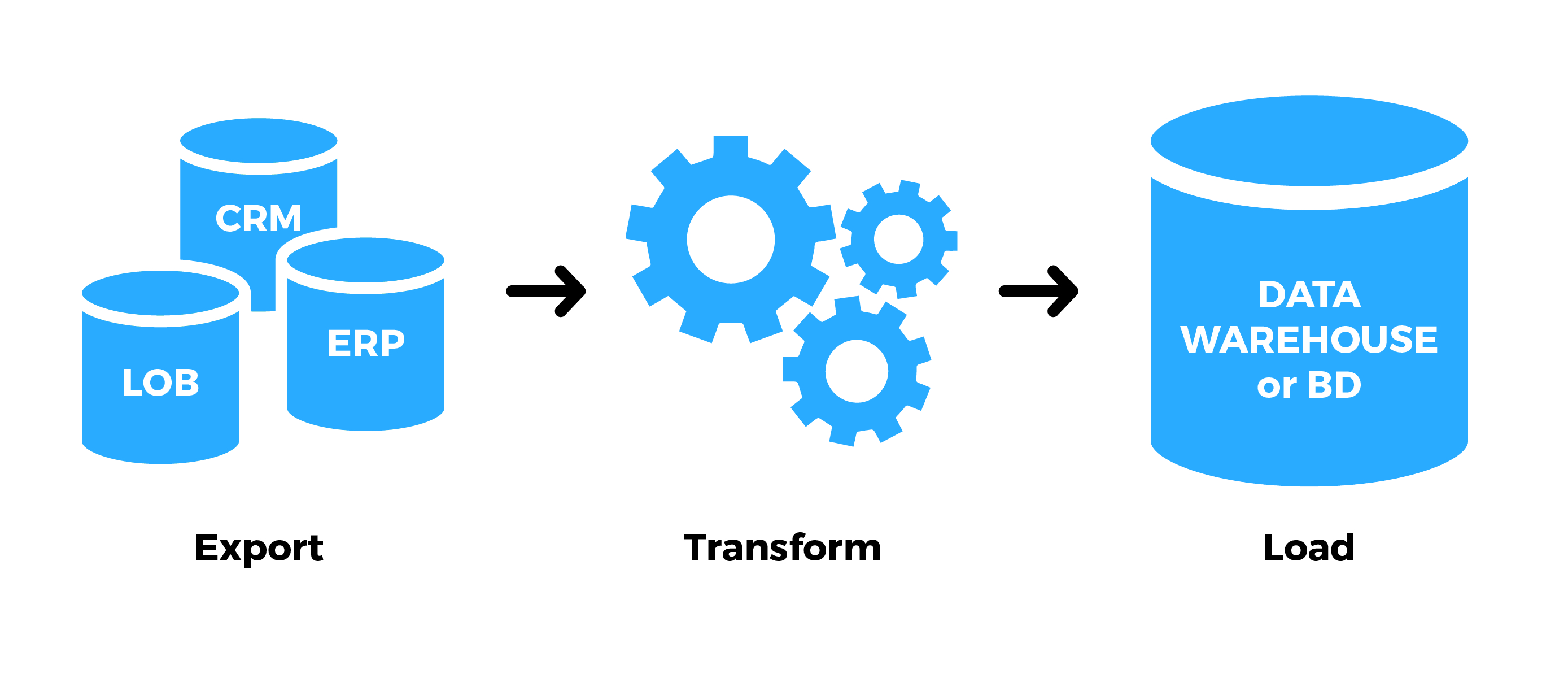

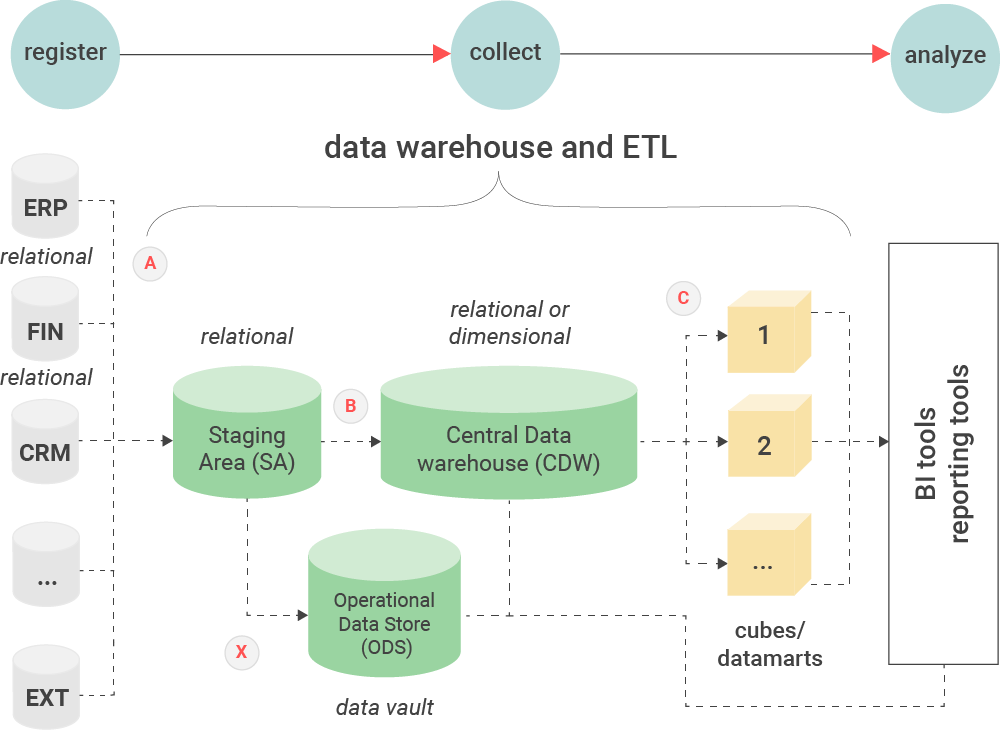

The term ETL seems simple, but it can be tricky to implement in practice. Here’s a brief introduction to using it to harness your organization’s data.

ETL stands for ‘Extract’, ‘Transform’, and ‘Load’: the extraction of raw data (usually from multiple sources), the transformation of that data into foundational data, and the loading of the finished dataset into a destination (usually a data warehouse).

Data is a crucial and growing resource in the modern world, and businesses that fail to capitalize on it effectively stand to lose out. ETL is necessary to unify and harness data so that it is ready to be used for analytical purposes.

‘Extract’ is the first stage, in which the raw data is moved or copied from its original locations into interim storage. It’s essential to get the extraction stage right so that the rest of the data pipeline functions as expected. This involves a large number of considerations, such as differences in time zones, backfilling of failed sources or missing data, handling different APIs, and compliance with data security requirements.

During the ‘transform’ phase, the raw data is converted into foundational data. Rules are applied to prepare the extracted data for its purpose. These include cleansing, standardization, verification, formatting and sorting, labeling, and protection.

‘Loading’ is the final phase of ETL, where the transformed data is transferred into the target destination, which is usually a data warehouse or data lake. This often begins with a ‘full loading’, which includes all data, followed by regular ‘incremental loading’ of any differences. Considerations at this stage include cataloging, maintenance, archiving, and data governance. As you can see, ETL is far from simple, and we’ve only scratched the surface here.

ETL architecture is essentially a blueprint for your ETL process, showing step by step how it works from beginning to end. This includes the methodology used to transfer data, the transformation rules, and the tools and programming languages used. The more information your ETL architecture provides, the better. When designing your architecture, you also need to decide whether you’re using ETL or ELT. You can read more about this in our blog post on ETL vs. ELT.

An ETL tutorial wouldn’t be complete without mentioning ETL tools. Building an ETL pipeline from scratch often requires a number of different pieces of software, but the most popular tools are probably the programming languages used to construct and connect these together: SQL and Python. SQL (pronounced “sequel”) is a query language used to search and modify databases. Python is a versatile language that can be used for many different applications, and it has a number of useful modules for handling databases, so it finds popular use in ETL. SQL is relied upon heavily when constructing ETL pipelines, and it contains a vast number of commands for manipulating databases. A SQL tutorial is beyond the scope of this post, but an ETL example of SQL might include the following:

`INSERT INTO Customers (CustomerName, City, Country) SELECT SupplierName, City, Country FROM Suppliers`
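To make the extract, transform, and load phases described in this post a little more concrete, here is a minimal Python sketch using the standard-library `sqlite3` module. It is an illustration under stated assumptions, not a production pipeline: the `Suppliers` and `Customers` tables and their columns come from the SQL example in this post, while the in-memory database, the sample rows, the cleansing rules, and the function names (`extract`, `transform`, `load`, `run_pipeline`) are all hypothetical.

```python
import sqlite3


def extract(conn):
    """Extract: copy the raw supplier rows out of the source table."""
    query = "SELECT SupplierName, City, Country FROM Suppliers"
    return conn.execute(query).fetchall()


def transform(rows):
    """Transform: apply simple cleansing and standardization rules.

    The rules here are assumptions chosen for illustration:
    drop rows without a name, trim whitespace, title-case the city,
    upper-case the country, remove duplicates, and sort.
    """
    cleaned = set()
    for name, city, country in rows:
        if name is None or not name.strip():
            continue  # cleansing: skip rows with no usable name
        cleaned.add((
            name.strip(),
            (city or "").strip().title(),
            (country or "").strip().upper(),
        ))
    return sorted(cleaned)


def load(conn, rows):
    """Load: write the transformed rows into the target table (a full load)."""
    conn.executemany(
        "INSERT INTO Customers (CustomerName, City, Country) VALUES (?, ?, ?)",
        rows,
    )
    conn.commit()
    return len(rows)


def run_pipeline():
    """Build a throwaway in-memory database and run the three phases once."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE Suppliers (SupplierName TEXT, City TEXT, Country TEXT)")
    conn.execute("CREATE TABLE Customers (CustomerName TEXT, City TEXT, Country TEXT)")
    # Hypothetical raw data with the kinds of problems the transform phase handles.
    conn.executemany(
        "INSERT INTO Suppliers VALUES (?, ?, ?)",
        [
            ("  Acme Foods ", "london", "uk"),
            ("Acme Foods", "London", "UK"),   # duplicate once cleaned
            (None, "Paris", "fr"),            # missing name, dropped
            ("Nordic Fish", " oslo", "no "),
        ],
    )
    load(conn, transform(extract(conn)))
    result = conn.execute(
        "SELECT CustomerName, City, Country FROM Customers ORDER BY CustomerName"
    ).fetchall()
    conn.close()
    return result
```

Calling `run_pipeline()` returns the rows that ended up in `Customers`. In this sketch the four raw supplier rows collapse to two cleaned rows, because one is a duplicate after standardization and one has no name. A real pipeline would typically read from several sources and add incremental loading after the initial full load.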
